When most people hear the word automation, they immediately think of speed.

Faster deployments. Faster testing. Faster data processing.

But speed is not the real value of automation.

The true purpose of automation in the tech industry is to reduce human error — the small, repetitive, often invisible mistakes that compound into outages, security risks, data corruption, and operational instability.

Automation is fundamentally about building systems that behave predictably. It is about ensuring that critical processes do not depend on memory, mood, urgency, or manual precision. In high-scale digital environments, even minor inconsistencies can cascade into major failures. Automation minimizes those inconsistencies.

This article clarifies what automation actually solves, why it matters beyond productivity, and how modern engineering teams should think about it.

The Common Misconception: Automation Equals Speed

It is easy to assume that automation exists to help teams move faster. Continuous integration pipelines run tests automatically. Deployment scripts push code in seconds. Monitoring systems alert instantly.

Speed is a visible outcome.

But speed without consistency can create larger failures at a faster rate.

If a flawed manual process is simply accelerated, the organization does not become more stable — it becomes more fragile. Automation does not fix broken logic; it enforces defined logic. If the logic is poor, automation will replicate poor outcomes at scale.

The real advantage of automation is not acceleration. It is standardization.

Automation ensures that the same process runs the same way every time, without variation caused by fatigue, distraction, or oversight. It removes ambiguity from execution and replaces it with predictable workflows.

Speed is the surface benefit. Reliability is the structural benefit.

Human Error: The Invisible Risk in Technology

Human error in technology rarely comes from a lack of intelligence. It usually comes from:

- Repetition fatigue

- Context switching

- Manual configuration steps

- Time pressure

- Inconsistent documentation

Even experienced engineers can:

- Miss a configuration flag

- Deploy to the wrong environment

- Forget a migration step

- Push untested code

- Misconfigure permissions
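One way to guard against a missed flag or a wrong target environment is to encode the checklist as a validation step that runs before every deployment. The sketch below assumes a deployment is described by a plain dictionary. The key names (`environment`, `image_tag`, `migrations_pending`) and the set of allowed environments are hypothetical.

```python
from typing import Any

# Hypothetical set of valid deployment targets.
ALLOWED_ENVIRONMENTS = {"staging", "production"}
# Hypothetical flags that every deployment config must define.
REQUIRED_KEYS = ("environment", "image_tag", "migrations_pending")


def preflight_errors(config: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the deployment may proceed."""
    errors = [f"missing required key: {key}" for key in REQUIRED_KEYS if key not in config]
    env = config.get("environment")
    if env is not None and env not in ALLOWED_ENVIRONMENTS:
        errors.append(f"unknown environment: {env!r}")
    if config.get("migrations_pending"):
        errors.append("database migrations have not been applied")
    return errors


if __name__ == "__main__":
    # A typo in the environment name and a forgotten migration are both caught.
    print(preflight_errors({"environment": "prod", "image_tag": "v1.4.2", "migrations_pending": True}))
```

The check never gets tired or distracted. It asks the same questions on the hundredth deployment as on the first.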

These are not skill failures. They are cognitive limitations.

Humans are excellent at problem-solving and creative thinking. They are not excellent at repeating the exact same mechanical task perfectly hundreds of times.

Research on human error consistently associates repetitive, routine work with small slips and lapses. In complex systems, those small mistakes can result in:

- Downtime

- Data inconsistencies

- Security vulnerabilities

- Customer trust erosion

Automation exists to compensate for that limitation. It acts as a system-level safeguard against predictable human variability.

Automation as a Reliability Strategy

Modern systems are complex. Cloud infrastructure, microservices, container orchestration, CI/CD pipelines, third-party APIs — each layer introduces configuration points.

Manual management of such environments increases the probability of inconsistency.

Automation improves reliability by:

- Enforcing predefined workflows

- Removing manual intervention from critical processes

- Ensuring consistent environment configuration

- Automatically validating outputs

For example:

An automated deployment pipeline ensures that code passes testing before reaching production. Without automation, someone might skip testing under deadline pressure.
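The guardrail can be made concrete with a tiny pipeline runner. Stages run in a fixed order, and the deploy stage cannot be reached unless every earlier stage succeeds. This is a minimal sketch and does not reflect any particular CI system's API. The stage names and callables are hypothetical stand-ins for real build, test, and deploy commands.

```python
from typing import Callable

# A stage is a name plus a callable that returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that actually ran, so callers can
    see exactly where the pipeline halted.
    """
    executed = []
    for name, action in stages:
        executed.append(name)
        if not action():
            print(f"stage '{name}' failed; halting before later stages")
            break
    return executed


if __name__ == "__main__":
    def deploy() -> bool:
        print("deploying to production")  # hypothetical deploy step
        return True

    stages = [
        ("build", lambda: True),
        ("test", lambda: False),  # simulate a failing test suite
        ("deploy", deploy),
    ]
    print(run_pipeline(stages))  # deploy() is never called
```

Under deadline pressure, no one can skip the test stage here, because skipping it is not an available path.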

Automation acts as a guardrail, not just an accelerator.

When reliability becomes automated, trust in systems increases. Teams deploy more confidently because processes are verified automatically. Reliability is not dependent on individual memory — it is embedded in the workflow.

Standardization Over Acceleration

Consider infrastructure provisioning.

Manually setting up servers introduces variation. Slight differences in configuration accumulate over time. One server might have a missing package. Another might have different permissions.

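Infrastructure as Code (IaC) addresses this by defining infrastructure in version-controlled configuration files. Every environment is built from the same definition, so differences between servers show up as visible changes in code rather than as silent drift.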
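The central idea behind IaC is declarative. The desired state lives in version control, and the tool computes the difference between that declaration and what actually exists before it changes anything. The sketch below shows only that diffing step, using plain dictionaries. Real IaC tools do far more, and the resource names and attributes here are hypothetical.

```python
from typing import Any

# Resource name -> declared (or observed) attributes.
Resources = dict[str, dict[str, Any]]


def plan(desired: Resources, actual: Resources) -> dict[str, list[str]]:
    """Compare declared resources with observed ones and list the required changes."""
    to_create = sorted(name for name in desired if name not in actual)
    to_delete = sorted(name for name in actual if name not in desired)
    # A resource present on both sides but with different attributes has drifted.
    to_update = sorted(
        name for name in desired if name in actual and desired[name] != actual[name]
    )
    return {"create": to_create, "update": to_update, "delete": to_delete}


if __name__ == "__main__":
    desired = {
        "web-1": {"packages": ["nginx"], "mode": "0644"},
        "web-2": {"packages": ["nginx"], "mode": "0644"},
    }
    actual = {
        "web-1": {"packages": ["nginx"], "mode": "0644"},
        "web-2": {"packages": [], "mode": "0777"},  # missing package, wrong permissions
        "old-db": {"packages": ["postgresql"], "mode": "0600"},
    }
    print(plan(desired, actual))  # {'create': [], 'update': ['web-2'], 'delete': ['old-db']}
```

Running `plan` against an environment that matches its declaration returns three empty lists. Any non-empty result is drift made explicit.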

The benefit is not primarily speed. It is predictability.

Predictability reduces:

- Production inconsistencies

- Debugging time

- Configuration drift

- Security vulnerabilities

Automation creates uniformity across systems.

Uniform systems are easier to scale, audit, and maintain. When environments are reproducible, disaster recovery becomes simpler. Scaling operations becomes structured instead of reactive.

Standardization reduces chaos. Chaos increases error probability.

Automation in Testing: Preventing Regression

Manual testing can miss edge cases. Even thorough QA teams cannot retest every scenario after every update.

Automated test suites ensure that:

- Core functionality remains intact

- Known bugs do not reappear

- Performance thresholds are monitored

- Edge cases are consistently validated

Again, speed is a byproduct. The real advantage is regression prevention.

A fast release with hidden defects is more expensive than a slower release whose core behavior has been verified.

Automated testing builds institutional memory into the codebase. It ensures that lessons learned from past bugs are preserved as test cases. Without automation, teams rely on recollection. With automation, knowledge becomes executable.
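Turning a past bug into a permanent test might look like the following. The function and its earlier bug are hypothetical. Suppose a previous version of `parse_port` accepted `"0"` and values above 65535, and the fix was recorded as test cases so the mistake cannot silently return. The example uses Python's standard `unittest` module.

```python
import unittest


def parse_port(value: str) -> int:
    """Parse a TCP port number, rejecting values outside 1-65535."""
    port = int(value)
    if not 1 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    return port


class ParsePortRegressionTests(unittest.TestCase):
    def test_valid_port(self):
        self.assertEqual(parse_port("8080"), 8080)

    def test_zero_is_rejected(self):
        # Regression: a previous (hypothetical) version accepted port 0.
        with self.assertRaises(ValueError):
            parse_port("0")

    def test_upper_bound_is_rejected(self):
        # Regression: a previous (hypothetical) version accepted 65536.
        with self.assertRaises(ValueError):
            parse_port("65536")
```

In CI, a command such as `python -m unittest` would run these checks on every change. The knowledge that port 0 was once a bug then no longer depends on anyone remembering it.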

That is long-term risk reduction.

Security and Compliance: Reducing Risk

Many security gaps originate in manual processes:

- Incorrect firewall rules

- Misconfigured access permissions

- Forgotten updates

- Expired certificates

Automation can enforce security baselines:

- Automated vulnerability scans

- Role-based access templates

- Continuous compliance checks

- Scheduled patch updates

When security depends entirely on manual vigilance, risk increases. Automation transforms security from reactive to systematic.

Instead of discovering issues after a breach, automated systems detect anomalies continuously. Instead of hoping updates are applied consistently, patching can be scheduled and verified automatically.

In regulated industries, automation also ensures audit readiness. Compliance evidence becomes reproducible rather than manually compiled.
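A continuous compliance check can be as simple as evaluating current settings against a written baseline on a schedule. The sketch below assumes that firewall rules and certificate expiry dates have already been collected into plain records. The field names, the 30-day renewal window, and the rule that SSH must never be open to `0.0.0.0/0` are illustrative assumptions, not a standard.

```python
from datetime import date, timedelta

# Illustrative baseline values; a real policy would come from the organization's own standards.
CERT_RENEWAL_WINDOW = timedelta(days=30)
RESTRICTED_PORTS = {22}  # assumed policy: never open these ports to the whole internet


def compliance_findings(
    firewall_rules: list[dict], certificates: dict[str, date], today: date
) -> list[str]:
    """Check firewall rules and certificate expiry dates against the baseline."""
    findings = []
    for rule in firewall_rules:
        if rule["source"] == "0.0.0.0/0" and rule["port"] in RESTRICTED_PORTS:
            findings.append(f"rule {rule['name']}: port {rule['port']} open to the internet")
    for host, expires in certificates.items():
        if expires < today:
            findings.append(f"certificate for {host} expired on {expires.isoformat()}")
        elif expires - today <= CERT_RENEWAL_WINDOW:
            findings.append(f"certificate for {host} expires on {expires.isoformat()}")
    # Sorting makes repeated runs over the same state produce identical reports.
    return sorted(findings)


if __name__ == "__main__":
    rules = [
        {"name": "allow-ssh", "source": "0.0.0.0/0", "port": 22},
        {"name": "allow-https", "source": "0.0.0.0/0", "port": 443},
    ]
    certs = {"api.example.com": date(2024, 7, 10), "www.example.com": date(2025, 1, 1)}
    for finding in compliance_findings(rules, certs, today=date(2024, 7, 1)):
        print(finding)
```

Because the output is deterministic for a given state, each scheduled run can be archived as reproducible audit evidence.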

The Psychological Benefit of Automation

Beyond technical stability, automation reduces mental load.

Engineers working in highly manual environments spend energy remembering procedural steps. This increases stress and decreases cognitive capacity for higher-level thinking.

When repetitive tasks are automated, teams often find that:

- Engineers focus on architecture and optimization

- Strategic thinking improves

- Creativity increases

- Burnout decreases

Automation shifts human effort from repetition to innovation.

Reducing operational anxiety also improves team morale. When engineers trust deployment systems and monitoring pipelines, they feel safer making improvements. Psychological safety grows when processes are dependable.

Automation is not just a technical tool. It is an organizational stabilizer.

Where Automation Should Not Replace Humans

Automation is not a substitute for judgment.

It should not replace:

- Strategic decision-making

- Architecture design

- Ethical considerations

- Complex problem diagnosis

Automation executes predefined logic. Humans define that logic.

Over-automating without understanding process fundamentals can introduce large-scale failures. A flawed automated system replicates errors at scale.
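A common way to keep a flawed automated change from spreading is a staged, canary-style rollout. The change is applied to a small batch first, health is checked, and the rollout halts if anything fails. In the sketch below, `apply_change` and `is_healthy` are hypothetical callables that stand in for real deployment and health-check steps, and the batch sizes are arbitrary.

```python
from typing import Callable


def staged_rollout(
    hosts: list[str],
    apply_change: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    batch_sizes: tuple[int, ...] = (1, 5),
) -> list[str]:
    """Apply a change in growing batches and return the hosts that were changed.

    Batches follow batch_sizes; any remaining hosts form one final batch.
    The rollout stops after the first batch that contains an unhealthy host.
    """
    changed: list[str] = []
    remaining = list(hosts)
    sizes = list(batch_sizes)
    while remaining:
        size = sizes.pop(0) if sizes else len(remaining)
        batch, remaining = remaining[:size], remaining[size:]
        for host in batch:
            apply_change(host)
            changed.append(host)
        if not all(is_healthy(host) for host in batch):
            print(f"unhealthy host in batch {batch}; halting rollout")
            break
    return changed
```

On a ten-host fleet with the default batch sizes, a change that breaks every host is stopped after touching one host. Automation still executes the flawed logic, but the design limits how far that logic spreads.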

Blind trust in automation can also create complacency. Systems must be monitored, reviewed, and periodically audited. Automation reduces routine error — it does not eliminate responsibility.

The healthiest automation strategies balance human oversight with mechanical precision.

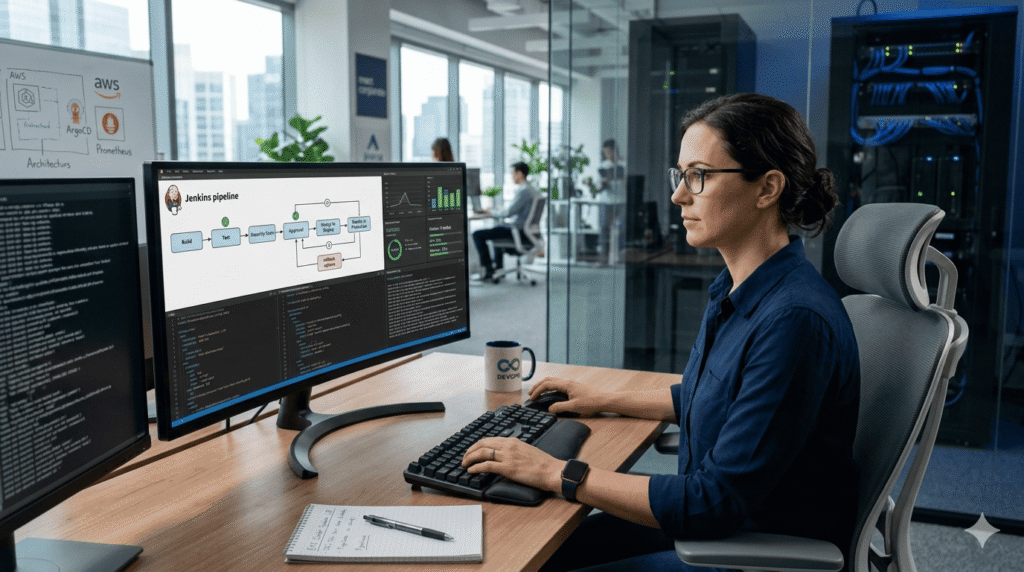

The Role of DevOps in Automation Thinking

DevOps culture emphasizes:

- Continuous integration

- Continuous deployment

- Monitoring and feedback loops

- Infrastructure as Code

These practices are often associated with faster releases. But their foundational goal is reliability and repeatability.

DevOps succeeds not because it speeds things up, but because it reduces deployment-related uncertainty.

Consistency builds trust in systems.

By integrating automation into development workflows, DevOps reduces the friction between development and operations teams. Instead of manual handoffs, pipelines enforce shared standards.

The result is fewer surprises in production and fewer emergency fixes.

AI and the Next Phase of Automation

Artificial intelligence is expanding automation capabilities. From automated code generation to anomaly detection, AI can reduce human intervention even further.

However, the same principle remains:

AI is valuable when it reduces error probability, not when it simply increases output volume.

Automated decision systems must be audited, monitored, and validated. Otherwise, they amplify mistakes.

The next phase of automation will likely combine:

- Predictive monitoring

- Self-healing infrastructure

- Intelligent error detection

- Automated incident response

But even as AI becomes more capable, human oversight remains critical. Automation should enhance decision-making — not replace accountability.
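A sketch of that balance follows. A simple statistical check flags an anomalous metric. Low-risk responses may run automatically, anything else is escalated to a person, and every decision is written to an audit log. The z-score threshold, the action names, and the allowlist are hypothetical. A z-score is a basic statistical baseline rather than an AI model, but the oversight pattern around it would be the same for a learned detector.

```python
import statistics
from dataclasses import dataclass, field

# Hypothetical actions considered safe to run without human approval.
AUTO_APPROVED_ACTIONS = {"restart_service"}


@dataclass
class Responder:
    z_threshold: float = 3.0
    audit_log: list[str] = field(default_factory=list)

    def is_anomalous(self, history: list[float], latest: float) -> bool:
        """Flag values far from the historical mean (history needs at least two points)."""
        mean = statistics.mean(history)
        stdev = statistics.stdev(history)
        if stdev == 0:
            return latest != mean
        return abs(latest - mean) / stdev > self.z_threshold

    def respond(self, history: list[float], latest: float, proposed_action: str) -> str:
        if not self.is_anomalous(history, latest):
            decision = "no_action"
        elif proposed_action in AUTO_APPROVED_ACTIONS:
            decision = f"auto:{proposed_action}"
        else:
            decision = f"escalate:{proposed_action}"  # a human makes the call
        # Every decision is recorded, including the decision to do nothing.
        self.audit_log.append(f"latest={latest} proposed={proposed_action} decision={decision}")
        return decision
```

The allowlist keeps accountability explicit: someone decided in advance which actions the system may take on its own, and the log shows what it actually did.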

Reframing Automation in the Tech Industry

If organizations measure automation success purely by velocity, they risk overlooking its true benefit.

More meaningful metrics include:

- Reduction in production incidents

- Lower rollback frequency

- Fewer configuration inconsistencies

- Improved deployment reliability

- Reduced security vulnerabilities
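Several of these metrics can be computed from ordinary deployment records. The sketch assumes each record notes whether the deployment caused a production incident and whether it was rolled back. Those field names are hypothetical. Change failure rate, the share of deployments that cause a failure in production, is one of the key metrics tracked by the DORA research program.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    caused_incident: bool  # hypothetical field: did this release trigger a production incident?
    rolled_back: bool      # hypothetical field: was this release reverted?


def reliability_metrics(deployments: list[Deployment]) -> dict[str, float]:
    """Return the fraction of deployments that failed and the fraction rolled back."""
    total = len(deployments)
    if total == 0:
        return {"change_failure_rate": 0.0, "rollback_rate": 0.0}
    failures = sum(d.caused_incident for d in deployments)
    rollbacks = sum(d.rolled_back for d in deployments)
    return {
        "change_failure_rate": failures / total,
        "rollback_rate": rollbacks / total,
    }
```

For example, 20 deployments with two incidents and one rollback give a change failure rate of 0.1 and a rollback rate of 0.05. Tracking these ratios across releases, rather than deployment count alone, shows whether automation is reducing errors or only increasing throughput.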

Speed matters. But stability matters more.

Automation is not about moving fast. It is about moving consistently and safely.

Organizations that understand this build systems that scale sustainably instead of collapsing under growth pressure.

Conclusion: Automation as Error Reduction Infrastructure

Automation is often marketed as a productivity booster. In reality, it is error-reduction infrastructure.

It standardizes processes. It enforces consistency. It prevents avoidable mistakes. It reduces operational risk.

When implemented thoughtfully, automation frees humans to focus on what they do best — critical thinking, creativity, and strategic problem-solving.

In the long run, the most resilient technology systems will not be the fastest ones.

They will be the ones designed to minimize human error.

References and Further Reading

Google SRE Book – Reliability Engineering https://sre.google/sre-book/table-of-contents/

AWS DevOps Overview https://aws.amazon.com/devops/what-is-devops/

Microsoft – Infrastructure as Code https://learn.microsoft.com/en-us/devops/deliver/what-is-infrastructure-as-code

Google Cloud – CI/CD Overview https://cloud.google.com/architecture/devops/devops-tech-continuous-integration

NIST Risk Management Framework https://csrc.nist.gov/projects/risk-management/about-rmf

DevOps Research and Assessment (DORA) https://dora.dev/