Introduction

For many years, deploying applications was one of the most challenging parts of software development. Developers would build software on their local machines, only to discover that it behaved differently once deployed on servers. Differences in operating systems, dependencies, libraries, and configurations often caused applications to fail unexpectedly.

This problem became known by the phrase developers used to describe it: “It works on my machine.”

Modern software systems require consistent environments across development, testing, and production. Without this consistency, teams spend significant time debugging environment-related issues instead of building features.

Docker changed this by letting developers package an application together with its dependencies into a portable container image. The same image can run on a laptop, on a test server, and in production, which makes deployments more predictable and easier to scale.

Today, containerization has become a foundational technology in modern cloud-native development.

The Deployment Challenges Before Containerization

Before container technology became popular, organizations relied on several traditional deployment approaches. Each had limitations that affected scalability and reliability.

Developers commonly deployed applications directly on physical servers or virtual machines. In such setups, different applications often shared the same system environment. This frequently caused dependency conflicts when multiple applications required different versions of libraries or runtime environments.

For example, one application might require Node.js version 16, while another might depend on version 18. Managing these conflicts on the same server could lead to instability.
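The conflict is easy to see in a small sketch. The application names and the `find_conflicts` helper are hypothetical and only illustrate the idea: a shared host provides one version of a runtime at a time, so applications that pin different versions cannot all be satisfied.

```python
from collections import defaultdict

# Hypothetical applications and the runtime versions they require.
requirements = {
    "billing-app": {"node": "16"},
    "dashboard-app": {"node": "18"},
    "reports-app": {"node": "18"},
}


def find_conflicts(requirements):
    """Return runtimes that are pinned to more than one version on a shared host."""
    versions = defaultdict(set)
    for app, runtimes in requirements.items():
        for runtime, version in runtimes.items():
            versions[runtime].add(version)
    # A runtime is in conflict when applications disagree about its version.
    return {runtime: sorted(v) for runtime, v in versions.items() if len(v) > 1}


print(find_conflicts(requirements))
```

Here `node` is reported as a conflict because the three applications ask for two different versions between them.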

Virtual machines improved isolation by giving each application its own complete guest operating system. Virtualization products from vendors such as VMware allowed teams to create separate environments for applications. However, every virtual machine carries the memory, disk, and boot-time cost of a full operating system, so running many of them consumes significant resources.

These limitations made deployment slower and infrastructure management more complex.

Consistent Development and Production Environments

One of Docker’s most significant contributions is environment consistency. Developers can define an application’s environment using a Dockerfile, which specifies the base system image, dependencies, and commands required to run the application.

When an image is built from this Dockerfile, it bundles the application with the libraries and runtime it needs. Every container started from that image runs in the same environment.

Packaging the environment this way removes most differences between development and production. For example, a developer building a backend service in Python can package the exact interpreter version and required libraries inside the image.
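To make this concrete, here is a minimal sketch that prints a Dockerfile for such a Python service. The `render_dockerfile` helper, the file names `requirements.txt` and `app.py`, and the `/app` working directory are assumptions chosen for illustration; a real project would adapt them.

```python
def render_dockerfile(python_version, requirements_file, entrypoint):
    """Render Dockerfile text for a simple Python service (illustrative only)."""
    lines = [
        # Pin the interpreter version through the base image tag.
        f"FROM python:{python_version}-slim",
        "WORKDIR /app",
        # Copy the dependency list first so the install layer can be cached.
        f"COPY {requirements_file} .",
        f"RUN pip install --no-cache-dir -r {requirements_file}",
        # Copy the application source into the image.
        "COPY . .",
        f'CMD ["python", "{entrypoint}"]',
    ]
    return "\n".join(lines) + "\n"


print(render_dockerfile("3.12", "requirements.txt", "app.py"))
```

The interpreter version and the dependency list both live in the file, so anyone who builds it gets the same environment rather than whatever happens to be installed on their machine.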

As a result, teams experience fewer deployment failures caused by missing dependencies or incompatible system configurations.

Faster Deployment and Scalability

Containers start much faster than traditional virtual machines because they do not require booting a full operating system.

This speed makes them ideal for modern infrastructure where applications must scale quickly to handle varying workloads.

Container orchestration platforms such as Kubernetes can automatically launch additional containers when traffic increases and shut them down when demand decreases.

This dynamic scaling model allows organizations to efficiently manage infrastructure resources while maintaining application performance.
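The scaling decision itself is simple arithmetic. The sketch below follows the proportional rule described in the Kubernetes Horizontal Pod Autoscaler documentation (desired replicas = ceil(current replicas × current metric / target metric)). It is a simplification: the real autoscaler also applies a tolerance and stabilization windows, and the function name and default limits here are assumptions.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Scale the replica count in proportion to load, clamped to [min, max]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Traffic spike: average CPU is at 90% against a 60% target.
print(desired_replicas(3, current_metric=90, target_metric=60))
# Demand drops: average CPU is at 20% against the same target.
print(desired_replicas(5, current_metric=20, target_metric=60))
```

In the first call the replica count grows from 3 to 5; in the second it shrinks from 5 to 2.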

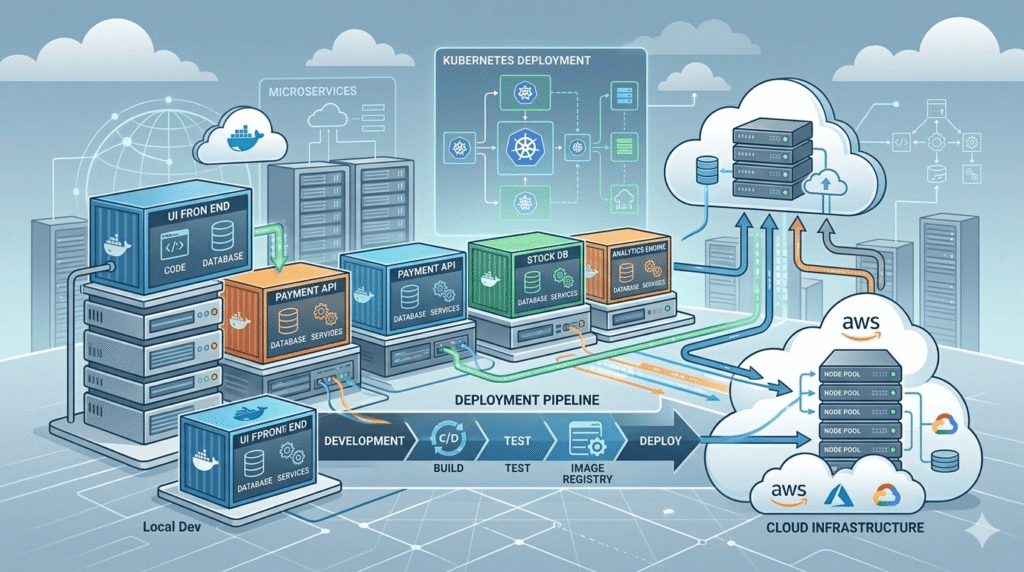

Companies building microservices architectures often rely on containers to deploy independent services that communicate with each other.

Improved Continuous Integration and Continuous Deployment (CI/CD)

Modern development workflows depend heavily on automation pipelines that build, test, and deploy software continuously.

Docker integrates naturally with CI/CD systems by providing a consistent environment for automated testing and deployment.

When developers push code to repositories hosted on platforms like GitHub, CI/CD pipelines can automatically build Docker images, run tests inside containers, and deploy applications if tests pass.
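The build, test, and deploy flow can be sketched as a short script. This is an illustration, not how any particular CI system is implemented: the image tag is hypothetical, the sketch assumes the image contains `pytest`, and pushing the image to a registry stands in for the deploy step.

```python
import subprocess


def pipeline_steps(image_tag):
    """Return the build, test, and publish commands for one pipeline run."""
    return [
        ["docker", "build", "-t", image_tag, "."],
        ["docker", "run", "--rm", image_tag, "pytest"],
        ["docker", "push", image_tag],
    ]


def run_pipeline(image_tag, run=subprocess.call):
    """Run each step in order and stop at the first failure.

    `run` takes a command list and returns its exit code. It is injectable
    so the flow can be exercised without Docker installed.
    """
    completed = []
    for command in pipeline_steps(image_tag):
        if run(command) != 0:
            return False, completed
        completed.append(command[1])
    return True, completed


def dry_run(command):
    """Print the command instead of executing it."""
    print(" ".join(command))
    return 0


# Hypothetical image tag; the dry run only prints the commands.
print(run_pipeline("registry.example.com/team/app:1.0", run=dry_run))
```

Because the loop stops at the first non-zero exit code, a failing test run means the image is never pushed.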

Tools such as Jenkins and GitHub Actions commonly use containers to ensure reliable build environments.

Because the image that passed the tests is the same one that gets deployed, there is less environment-specific configuration to maintain and fewer surprises at release time.

Efficient Resource Utilization

Containers are lightweight compared to virtual machines because they share the host operating system’s kernel.

This allows multiple containers to run on the same server while consuming fewer system resources.

For example, a single cloud server can run dozens of containers without the overhead associated with running multiple operating systems.
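A rough calculation shows where the difference comes from. All figures below are illustrative assumptions, not measurements: a 16 GB host, an application that needs 256 MB, a guest operating system that needs 512 MB per virtual machine, and about 10 MB of per-container overhead.

```python
def instances_that_fit(host_mb, app_mb, overhead_mb):
    """How many instances fit when each needs app memory plus a fixed overhead."""
    return host_mb // (app_mb + overhead_mb)


HOST_MB = 16 * 1024          # assumed host memory
APP_MB = 256                 # assumed memory per application
GUEST_OS_MB = 512            # assumed per-VM guest operating system overhead
CONTAINER_OVERHEAD_MB = 10   # assumed per-container overhead

print("virtual machines:", instances_that_fit(HOST_MB, APP_MB, GUEST_OS_MB))
print("containers:", instances_that_fit(HOST_MB, APP_MB, CONTAINER_OVERHEAD_MB))
```

With these made-up figures the host fits 21 virtual machines or 61 containers. The exact numbers depend entirely on the workload, but the gap comes from the per-instance operating system overhead that containers avoid.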

Cloud providers such as Amazon Web Services and Google Cloud offer managed container services that let organizations deploy applications while handing much of the underlying infrastructure management to the provider.

Efficient resource utilization reduces operational costs and improves infrastructure scalability.

Enabling Microservices Architecture

Modern software systems increasingly adopt microservices architectures where applications are divided into smaller independent services.

Each service performs a specific function and communicates with others through APIs or events.

Containers make it easier to deploy and manage microservices because each service can run inside its own isolated container.

This approach simplifies updates and allows teams to deploy individual services without affecting the entire application.

For example, a web application might consist of separate containers for:

- the frontend interface

- the backend API

- the database service

- background processing jobs

Container orchestration systems such as Kubernetes manage communication, scaling, and availability of these services.
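The benefit of one container per service shows up when a single service changes. In the sketch below, the service names mirror the list above, while the image names, version tags, and helper functions are hypothetical. Updating the backend API produces a new deployment description in which only that service differs.

```python
# Hypothetical deployment: each service runs from its own versioned image.
deployment = {
    "frontend": "shop/frontend:1.4.0",
    "backend-api": "shop/backend-api:2.1.0",
    "database": "postgres:16",
    "worker": "shop/worker:0.9.3",
}


def update_service(deployment, name, new_image):
    """Return a copy of the deployment with one service pointing at a new image."""
    if name not in deployment:
        raise KeyError(f"unknown service: {name}")
    updated = dict(deployment)
    updated[name] = new_image
    return updated


def changed_services(before, after):
    """List the services whose image differs between two deployments."""
    return sorted(name for name in after if before.get(name) != after[name])


new_deployment = update_service(deployment, "backend-api", "shop/backend-api:2.2.0")
print(changed_services(deployment, new_deployment))
```

Only the changed service needs to be restarted; an orchestrator can leave the other containers running as they are.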

Conclusion

Application deployment has evolved significantly as software systems have grown more complex and distributed.

Traditional deployment methods often struggled with environment inconsistencies, dependency conflicts, and inefficient infrastructure usage.

The introduction of Docker transformed this landscape by making application environments portable, consistent, and easy to reproduce.

By making containers practical for everyday use, Docker has simplified deployment workflows, improved scalability, and supported the widespread adoption of cloud-native architectures.

Today, containers are a fundamental part of modern software infrastructure, helping organizations build reliable systems that can scale efficiently in dynamic environments.
