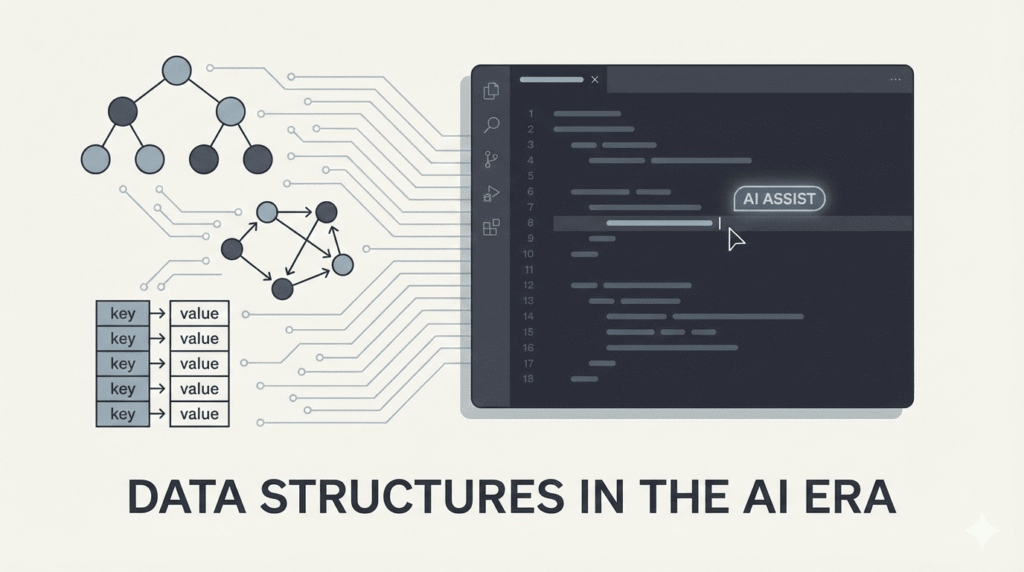

AI coding assistants can now generate functions, refactor code, scaffold APIs, and even suggest architectural patterns. For many developers, this raises a quiet question:

If AI can write the code, do fundamentals like data structures still matter?

The short answer is yes.

The longer answer is that they matter even more.

As implementation becomes easier, judgment becomes the differentiator. And data structures sit at the core of engineering judgment.

AI Can Generate Code — But It Doesn’t Own the Consequences

AI tools are excellent at producing syntactically correct implementations. Ask for a sorting function, a caching layer, or a tree traversal, and you’ll get something workable within seconds.

But AI does not own:

- Long-term performance trade-offs

- Memory constraints

- Scalability under real-world load

- Interaction patterns between components

- Maintenance complexity over time

Those responsibilities remain human.

Choosing the right data structure isn’t about writing loops correctly. It’s about anticipating how the system behaves as it grows.

Data Structures Shape Performance at Scale

Many performance problems don’t come from inefficient algorithms — they come from inappropriate data structure choices.

For example:

- Using arrays where hash maps are needed

- Nesting structures so deeply that every lookup has to walk several levels

- Choosing linked structures when cache locality matters

- Ignoring space–time trade-offs

AI can generate solutions that “work.”

But understanding whether the solution scales requires foundational knowledge.

When systems grow from hundreds to millions of records, those decisions compound.
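A minimal sketch of the first point above, assuming a simple "is this ID known?" check. The function names and data sizes are hypothetical and chosen only for illustration. Both versions return the same answer, but the list version scans the collection on every lookup, while the set version does an average constant-time hash lookup.

```python
import timeit

def count_known_list(known_ids, queries):
    """Membership test against a list: each `in` may scan the whole list."""
    return sum(1 for q in queries if q in known_ids)

def count_known_set(known_ids, queries):
    """Same logic, but a set makes each `in` an average O(1) hash lookup."""
    known = set(known_ids)
    return sum(1 for q in queries if q in known)

# Illustrative sizes only; the gap widens as the data grows.
known_ids = list(range(5_000))
queries = list(range(0, 10_000, 10))  # 1,000 queries, half of them present

slow = timeit.timeit(lambda: count_known_list(known_ids, queries), number=1)
fast = timeit.timeit(lambda: count_known_set(known_ids, queries), number=1)
print(f"list: {slow:.4f}s  set: {fast:.4f}s")
```

The exact timings depend on the machine, so none are quoted here. The point is the shape of the growth: doubling both inputs roughly quadruples the work for the list version and roughly doubles it for the set version.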

Modern Systems Still Depend on Classic Concepts

Despite new frameworks and abstractions, modern systems still rely on foundational structures:

- Databases use B-trees and LSM trees

- Caching systems rely on hash maps and eviction queues

- Many distributed systems use consistent hashing to spread keys across nodes

- Search engines leverage inverted indexes

- Message queues rely on buffering structures such as append-only logs and ring buffers

Even when developers don’t implement these directly, they interact with them constantly.

Understanding the underlying structure improves decision-making across the stack.
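To make the caching item concrete, here is a rough sketch of a least-recently-used (LRU) cache built on Python's `collections.OrderedDict`, where the hash map's key order doubles as the eviction queue. The `LRUCache` class and its methods are hypothetical names for illustration. Production caches add concerns such as expiry and thread safety that this sketch ignores.

```python
from collections import OrderedDict

class LRUCache:
    """Illustrative LRU cache: a hash map whose key order is the eviction queue."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        if key in self._items:
            self._items.move_to_end(key)
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used key

cache = LRUCache(capacity=2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")      # "a" is now the most recently used
cache.put("c", 3)   # over capacity, so "b" is evicted
```

Knowing that lookups, updates, and evictions are all constant-time here is what lets a developer judge whether a cache suggested by a framework or an AI tool will hold up under load.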

AI Suggestions Are Only as Good as Your Evaluation

One of the hidden risks of AI coding tools is uncritical acceptance.

Suppose an AI tool suggests one of the following:

- A particular collection type

- A caching mechanism

- A graph representation

- A state management structure

Before accepting it, developers must ask:

- Is this optimal for the expected load?

- What’s the time complexity under worst-case scenarios?

- How does this behave under concurrent access?

- What are the memory implications?

Without foundational understanding, developers risk approving solutions they cannot truly assess.

AI accelerates implementation.

It does not replace engineering reasoning.
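As an illustration of that kind of review, consider a task queue. The first function below is the sort of code an assistant might plausibly produce. It is a hypothetical example, not output from any specific tool. It is correct, but `list.pop(0)` shifts every remaining element, so draining n tasks costs O(n²) in total. The second version swaps in `collections.deque`, whose `popleft()` is O(1).

```python
from collections import deque

def drain_with_list(tasks):
    """Works, but each pop(0) shifts the rest of the list: O(n^2) overall."""
    queue = list(tasks)
    processed = []
    while queue:
        processed.append(queue.pop(0))
    return processed

def drain_with_deque(tasks):
    """Same behavior; popleft() is O(1), so the whole drain is O(n)."""
    queue = deque(tasks)
    processed = []
    while queue:
        processed.append(queue.popleft())
    return processed
```

Both functions pass the same tests on small inputs, which is exactly why the difference is easy to approve without noticing.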

Data Structures Teach System Thinking

Learning data structures isn’t just about interviews or textbook exercises.

It teaches:

- How to think about trade-offs

- How constraints shape design

- Why some problems demand specific structures

- How abstraction layers interact

These skills directly transfer to:

- API design

- Database schema decisions

- State management in frontend applications

- Distributed system architecture

In many ways, data structures are an early training ground for systems thinking.

Complexity Is Often Hidden — Until It Isn’t

Modern frameworks abstract complexity beautifully. But abstraction doesn’t eliminate cost — it hides it.

When applications begin to slow down or memory usage spikes, developers must look beneath the framework.

Diagnosing these issues effectively requires understanding:

- Big-O complexity

- Memory allocation patterns

- Data access frequency

- Structural bottlenecks

AI can suggest optimizations.

But without understanding the underlying structure, developers cannot confidently apply them.
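One way to look beneath the abstraction is to measure it. The sketch below uses the standard-library `tracemalloc` module to compare peak memory for two ways of summing squares. The helper `peak_bytes` and the workload are assumptions made up for this example. The two functions compute the same result, but one materializes every value in a list while the other streams them through a generator.

```python
import tracemalloc

def peak_bytes(fn):
    """Run fn() and return (result, peak bytes traced while it ran)."""
    tracemalloc.start()
    try:
        result = fn()
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak

def total_with_list(n):
    squares = [i * i for i in range(n)]  # holds all n values in memory at once
    return sum(squares)

def total_with_generator(n):
    return sum(i * i for i in range(n))  # produces one value at a time

list_total, list_peak = peak_bytes(lambda: total_with_list(100_000))
gen_total, gen_peak = peak_bytes(lambda: total_with_generator(100_000))
print(f"list peak: {list_peak} bytes, generator peak: {gen_peak} bytes")
```

The byte counts vary by Python version, so none are quoted here. What matters is that the list version's peak grows with `n` while the generator's stays roughly flat.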

The AI Era Raises the Bar, Not Lowers It

There’s a misconception that AI reduces the need for deep technical knowledge.

In reality, it increases the premium on judgment.

As routine implementation becomes automated:

- Architectural decisions become more visible

- Performance trade-offs become more consequential

- Design errors propagate faster

- Review responsibility becomes heavier

Developers who understand data structures can guide AI tools effectively. Those who don’t may simply generate more complex problems at higher speed.

Why Fundamentals Are Becoming a Competitive Advantage

In an environment where many engineers rely on similar AI tools, differentiation comes from:

- Recognizing when a generated solution is inefficient

- Refactoring structures proactively

- Designing systems with scalability in mind

- Avoiding hidden algorithmic traps

Fundamentals become leverage.

They allow developers to use AI as an amplifier — not a crutch.

Closing Insight: Tools Change. Principles Endure.

Programming languages evolve. Frameworks rise and fall. Tooling becomes more intelligent.

But the principles governing data organization, access patterns, and computational efficiency remain stable.

Data structures still matter because systems still run on data.

And in the age of AI coding tools, the engineers who understand the foundations are the ones who will build systems that scale, endure, and perform — long after the autocomplete finishes its suggestion.

References & Further Reading

- Introduction to Algorithms, Cormen et al.

https://mitpress.mit.edu/9780262046305/introduction-to-algorithms/

- Designing Data-Intensive Applications, Martin Kleppmann

https://dataintensive.net/

- Big-O Notation Explained, Khan Academy

https://www.khanacademy.org/computing/computer-science/algorithms

- Google Engineering Practices Documentation

https://google.github.io/eng-practices/

- Stanford CS106B Data Structures Course Materials

https://web.stanford.edu/class/cs106b/